Integrating API-First AI Content Research into Your CMS

Executive Summary

Editors and content teams often lose productive time jumping between research tools and their Content Management System (CMS). The Asana Anatomy of Work Index (2022) points to the same problem of time lost switching between apps. Embedding AI-driven research directly into the editor reduces this "context switching," shortens time to publish, and keeps all source information in one place.

Hordus is a Generative Engine Optimization (GEO) platform. GEO is the process of optimizing content so that AI models, such as ChatGPT or Gemini, can easily find, understand, and cite your brand as the "trusted answer." With an API-first design, Hordus helps brands become visible across Large Language Models (LLMs), the AI engines that power modern chat tools, by turning research into verified, multi-format content.

Why Surface AI Research Inside the CMS?

Putting AI research where editors write keeps the focus sharp. Rather than copying keyword lists from a separate dashboard, editors see inline topic ideas and source-cited summaries in their natural workflow.

Bringing AI research into the CMS streamlines workflows and preserves editorial context. The case rests on interruption research such as "The cost of interrupted work" by Gloria Mark, Daniela Gudith, and Ulrich Klocke (CHI 2008), and on the commonly cited finding that interrupted workers can take tens of minutes to fully resume focus. With Hordus, the benefits are practical: shorter drafting cycles and clearer attribution for AI-origin traffic, so marketing teams can trace where their visitors are coming from.

Hordus vs. Legacy Tools

Legacy tools like Semrush or Ahrefs are excellent for human-facing dashboards and market analysis, but they aren't designed to be easily embedded into an editorial interface. Hordus differentiates itself by being machine-readable. It uses "webhooks" (automated messages that one app sends to another when an event occurs) to plug directly into your CMS. Where legacy tools focus on manual analysis, Hordus focuses on programmatic alignment with editorial workflows and visibility within AI models.
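
To make the webhook idea concrete, here is a minimal sketch of how a CMS might check and decode an incoming webhook. It is an illustration only: the HMAC-SHA256 signing scheme, the `research.ready` event name, and the `post_id` field are assumptions made for this example, not a documented Hordus format.

```python
import hashlib
import hmac
import json


def sign(body: bytes, secret: bytes) -> str:
    """Hex HMAC-SHA256 of the raw request body (assumed signing scheme)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def parse_webhook(body: bytes, signature: str, secret: bytes) -> dict:
    """Verify the signature, then decode the JSON payload.

    Raises ValueError when the signature does not match the body.
    """
    expected = sign(body, secret)
    # compare_digest performs a constant-time comparison.
    if not hmac.compare_digest(expected, signature):
        raise ValueError("webhook signature mismatch")
    return json.loads(body)


if __name__ == "__main__":
    secret = b"demo-secret"  # hypothetical shared secret
    # Hypothetical payload shape, for illustration only.
    body = json.dumps({"event": "research.ready", "post_id": "42"}).encode()
    print(parse_webhook(body, sign(body, secret), secret))
```

Verifying the signature before parsing means the CMS only acts on messages from a sender that holds the shared secret.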

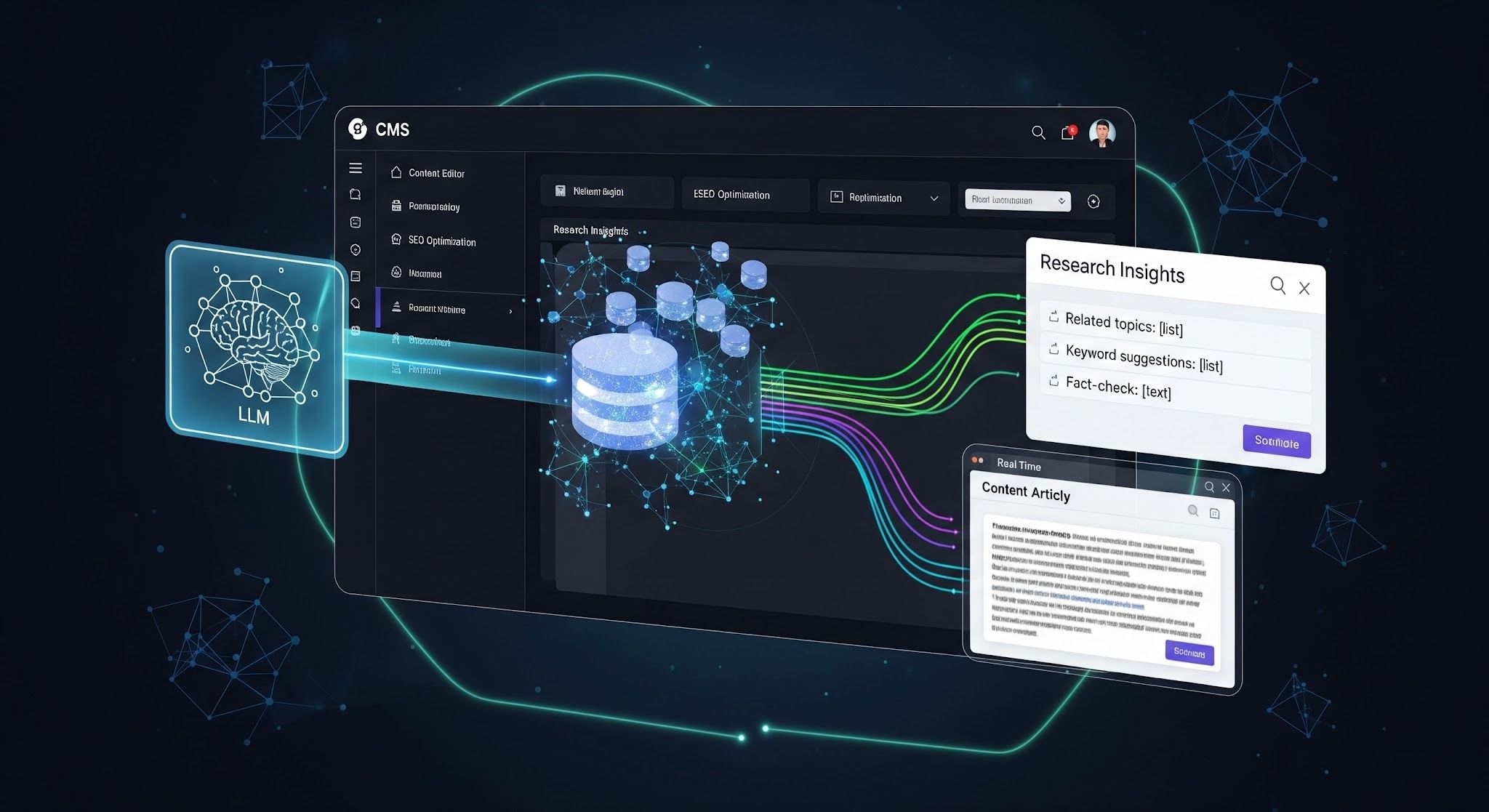

How It Works: The High-Level View

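The architecture of a modern content system involves turning text into "embeddings." As described in OpenAI’s Embeddings Guide, an embedding is a vector (a list of floating-point numbers) that represents a piece of text, and the distance between two vectors measures how related the texts are. This is what enables "semantic search" (searching by meaning rather than just exact keywords).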
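
As a rough illustration of semantic search, the sketch below ranks documents by cosine similarity to a query. The three-dimensional vectors and document titles are hand-made toy values; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; closer to 1.0 means more related."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank(query: list[float], docs: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Return (title, score) pairs sorted from most to least similar."""
    scored = [(title, cosine_similarity(query, vec)) for title, vec in docs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for real embeddings (assumed values).
DOCS = {
    "guide to email marketing": [0.9, 0.1, 0.0],
    "general marketing overview": [0.5, 0.5, 0.1],
    "kubernetes networking": [0.0, 0.2, 0.9],
}
QUERY = [1.0, 0.1, 0.0]

if __name__ == "__main__":
    for title, score in rank(QUERY, DOCS):
        print(f"{score:.3f}  {title}")
```

The query shares no keywords with any title, yet the ranking still orders documents by how close their vectors are, which is the core of searching by meaning.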

A typical data flow with Hordus looks like this:

- Your CMS updates a post and sends a signal to Hordus.

- Hordus returns research signals, such as competitive sources and summaries.

- These are stored in a "vector database," a database built to store embeddings and retrieve them by similarity. Pinecone is a popular choice here because it is a "managed, production-ready vector database built for low-latency retrieval" (Pinecone product documentation).

- The editor receives real-time suggestions and citations directly in the CMS sidebar.
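
The flow above can be sketched end to end in a few functions. Everything here is a stand-in: `fetch_research_signals` returns canned data instead of calling a real service, and `InMemorySignalStore` replaces the vector database with a plain dictionary keyed by post ID, so similarity search is left out. All names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchSignal:
    source_url: str
    summary: str


@dataclass
class InMemorySignalStore:
    """Stand-in for a vector database; keyed by post ID for simplicity."""
    _by_post: dict = field(default_factory=dict)

    def upsert(self, post_id: str, signals: list) -> None:
        self._by_post[post_id] = list(signals)

    def query(self, post_id: str) -> list:
        return list(self._by_post.get(post_id, []))


def fetch_research_signals(post_id: str, text: str) -> list:
    """Hypothetical stub for the research call; returns canned data."""
    return [ResearchSignal("https://example.com/source-a",
                           f"Summary related to post {post_id}")]


def on_post_updated(post_id: str, text: str, store: InMemorySignalStore) -> None:
    """Steps 1-3: the CMS signals an update, signals come back and are stored."""
    signals = fetch_research_signals(post_id, text)
    store.upsert(post_id, signals)


def sidebar_suggestions(post_id: str, store: InMemorySignalStore) -> list:
    """Step 4: format stored signals as cited suggestions for the editor."""
    return [f"{s.summary} (source: {s.source_url})" for s in store.query(post_id)]


if __name__ == "__main__":
    store = InMemorySignalStore()
    on_post_updated("42", "Draft text about email marketing", store)
    print(sidebar_suggestions("42", store))
```

Keeping the store behind `upsert` and `query` means the dictionary could later be swapped for a real vector database without changing the calling code.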

Implementation and Scalability

When integrating Hordus, companies must choose between "Real-time" and "Nearline" processing. Real-time is necessary when editors need suggestions in seconds, which requires a low-latency database such as Pinecone. Nearline is better for large archives where updates can happen hourly, which reduces costs. OpenAI’s documentation describes embeddings as a building block for "retrieval-augmented generation" (RAG) workflows, which allow AI to provide answers based on your specific, verified data.
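
One way to picture the difference between the two modes is a small router that either processes an update immediately or queues it for a later batch. This is a sketch under simplifying assumptions: `UpdateRouter` and its methods are hypothetical names, and a real nearline setup would call `flush` from a scheduler (for example, hourly) rather than by hand.

```python
from collections import deque
from typing import Callable


class UpdateRouter:
    """Route post updates to immediate or batched processing."""

    def __init__(self, process: Callable[[str], None]) -> None:
        self._process = process
        self._pending = deque()  # post IDs waiting for the next batch

    def submit(self, post_id: str, realtime: bool) -> None:
        if realtime:
            # Real-time: do the work now so the editor sees results quickly.
            self._process(post_id)
        else:
            # Nearline: defer the work until the next scheduled flush.
            self._pending.append(post_id)

    def flush(self) -> int:
        """Process everything queued so far; return how many items ran."""
        count = 0
        while self._pending:
            self._process(self._pending.popleft())
            count += 1
        return count


if __name__ == "__main__":
    processed = []
    router = UpdateRouter(processed.append)
    router.submit("live-post", realtime=True)
    router.submit("archive-post", realtime=False)
    print(processed)       # only the real-time item has run so far
    router.flush()
    print(processed)       # the queued item runs after the flush
```

Batching the archive work lets the expensive calls run at a predictable cadence, which is where the cost savings of nearline processing come from.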

FAQ

Q: What immediate gains will editors see?

Editors will see faster briefing, inline topic suggestions, and auto-populated citations. This reduces the need to switch between tools and shortens the time from first draft to publication.

Q: How does Hordus compare to Semrush or Ahrefs?

Hordus is built to be integrated directly into your CMS via API. While Semrush and Ahrefs focus on keyword dashboards for humans, Hordus focuses on making your content readable for AI models and tracking which assets those models are showing to users.

Q: Real-time or nearline: which should we choose?

Choose real-time if your editors need suggestions within seconds while they type. Choose nearline if you are processing a massive archive of historical content and want to save on operational costs.

Q: Which vector DB is best?

Pinecone is often preferred for its ease of use and reliability. Milvus is a strong alternative for very large-scale operations, while Elasticsearch is a good fit if your technical team already uses it for standard search.

Closing

Embedding AI research inside your CMS is about putting intelligence where editors already work. With an API-first platform like Hordus, teams get cited research and the data needed to measure AI-driven traffic.
